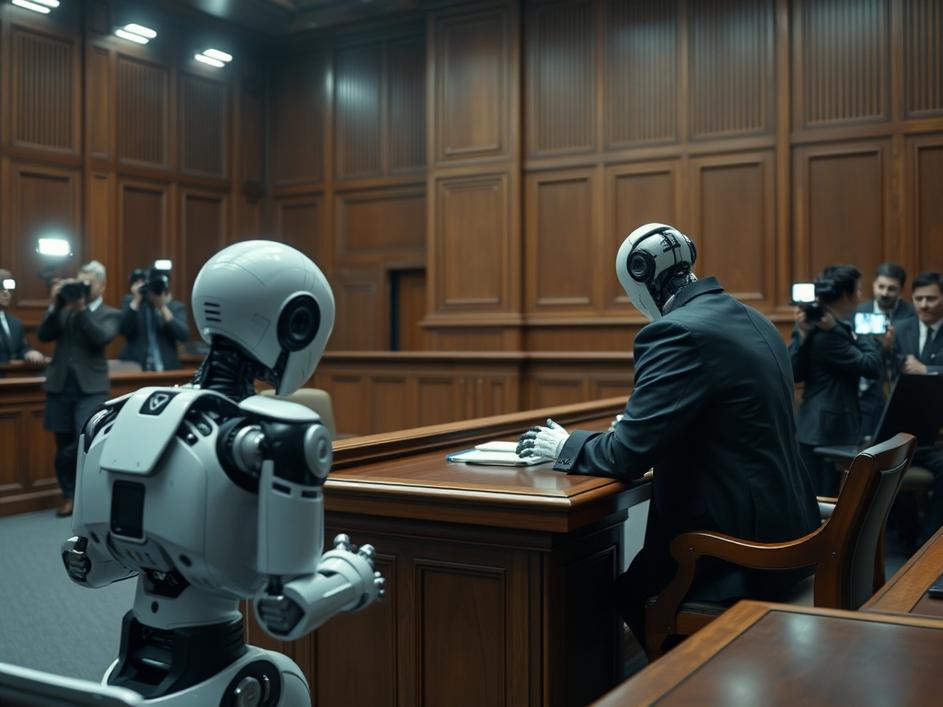

Recently, there’s been a lot of buzz around a dispute between the Trump administration and Anthropic, a prominent artificial intelligence company. Word on the street is that President Trump himself stepped in, directing all federal agencies to halt their dealings with the AI firm. This move raises a lot of questions about the government’s approach to AI and the future of these partnerships.

The core of the issue seems to stem from a disagreement with the Pentagon. While the specifics remain somewhat unclear, it seems plausible that differing visions for AI implementation, or concerns over data security and ethics, are at play. The Pentagon, understandably, has strict requirements for any technology it adopts, especially when it comes to sensitive data and national security applications. It’s not unusual for there to be some back-and-forth between government agencies and tech companies as they navigate these complicated waters. However, the fact that the conflict escalated to the level of a presidential directive suggests that these issues were quite serious.

Anthropic, for those unfamiliar, is a company that’s been making waves in the AI world. They focus on building safe and reliable AI systems, particularly large language models. These models are designed to understand and generate human-like text, which has potential applications in everything from customer service and content creation to research and development. They are known for their focus on “Constitutional AI,” a method to train AI systems to be aligned with human values and ethics. The company has attracted significant attention and funding due to its commitment to responsible AI development. Their technology is seen as promising, but perhaps that promise also comes with perceived risks, depending on who’s evaluating it.

This action against Anthropic might fit into a broader pattern from the Trump administration concerning technology companies. Throughout his presidency, Trump often took a confrontational stance toward tech giants, raising concerns about censorship, bias, and their influence on public discourse. This situation with Anthropic could be seen as an extension of those concerns, perhaps with the added layer of national security implications. It’s also possible that the administration had concerns about Anthropic’s connections or potential vulnerabilities, given the sensitive nature of their AI work. Whatever the exact reasons, the move underscores the government’s growing scrutiny of the AI sector.

So, what does all this mean for the future of AI development and government collaboration? For one, it highlights the challenges and complexities involved in integrating advanced AI technologies into government operations. It also emphasizes the need for clear guidelines and ethical frameworks to govern the use of AI, especially in areas like national security and defense. This incident might cause other AI companies to think twice about partnering with the government, or at least to be more cautious about the terms and conditions of those partnerships. It’s also a reminder that AI is not just a technological issue, but also a political and social one, with significant implications for our society.

This situation definitely throws a wrench into the future of AI partnerships with the government. Companies might be more hesitant to work with federal agencies, fearing similar repercussions or public backlash. The government, on the other hand, might become even more cautious about who it trusts with sensitive data and critical infrastructure. This could lead to a slowdown in the adoption of AI technologies across various sectors of the government, which could ultimately hinder innovation and efficiency.

Ultimately, what’s needed is more transparency and open dialogue about the government’s concerns regarding AI and its potential risks. The public deserves to know why these decisions are being made and what safeguards are being put in place to protect our interests. Without transparency, it’s easy for mistrust and suspicion to fester, which could ultimately undermine our ability to harness the full potential of AI for good. This incident with Anthropic serves as a wake-up call, urging us to have a more honest and open conversation about the role of AI in our society and the responsibilities that come with it.

Finding the right balance between innovation and security is key. We need to encourage responsible AI development while also ensuring that appropriate safeguards are in place to protect our national security and ethical values. This requires a collaborative effort between government, industry, and academia. We need to foster a culture of transparency, accountability, and ethical AI development. By working together, we can ensure that AI benefits all of humanity.
