The Pentagon has recently designated Anthropic, a prominent artificial intelligence company, as a supply chain risk. This development, highlighted in a recent episode of “Balance of Power: Late Edition,” raises significant questions about the security and reliability of AI technologies, especially in the context of national security. The designation suggests that the Department of Defense has concerns about Anthropic’s operations, possibly related to factors such as reliance on foreign components, data security practices, or the company’s ownership structure.

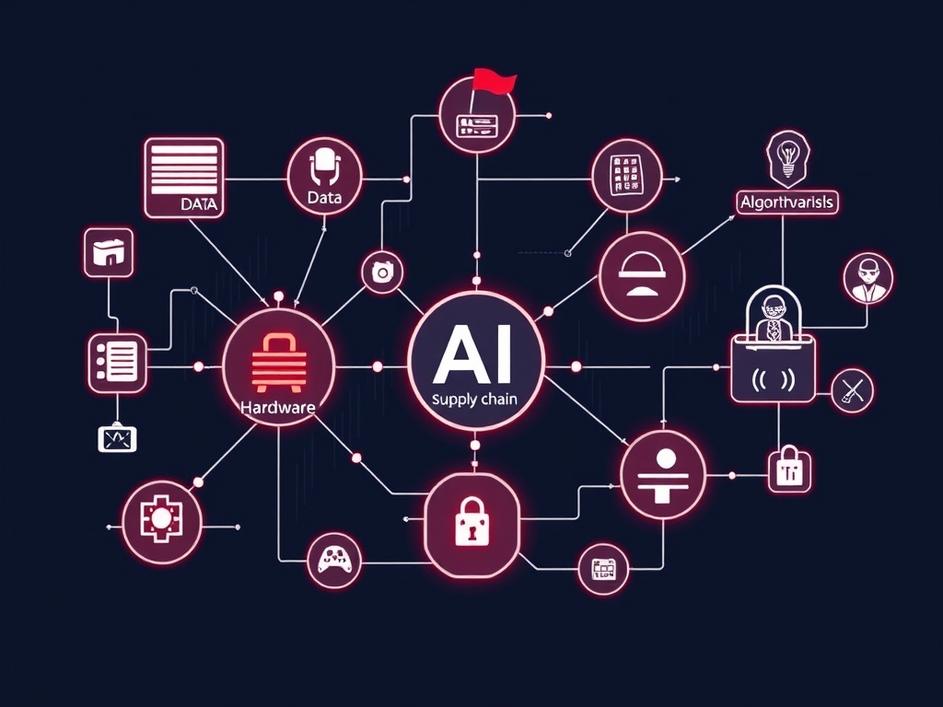

So, what exactly does it mean to be labeled a supply chain risk? In essence, it signals that the government sees potential vulnerabilities in the resources, processes, and technologies a company depends on to operate. For an AI firm like Anthropic, this could relate to the data it uses to train its models, the hardware it relies on, its software development processes, or the origin and security of its algorithms. If any of these elements were compromised, the result could be tampered AI systems, data breaches, or even malicious code introduced into critical infrastructure.

This designation has serious implications for Anthropic. It could affect the company’s ability to secure government contracts, attract investment, and maintain its reputation. More broadly, it shines a light on the growing scrutiny that AI companies are facing as their technologies become more deeply integrated into society. Governments are becoming increasingly aware of the potential risks associated with AI, and they are taking steps to mitigate those risks through regulations, audits, and supply chain assessments. The Pentagon’s decision signals a new era of caution and oversight in the AI industry.

At the heart of this issue is the global competition for AI dominance. Countries around the world are investing heavily in AI research and development, recognizing its potential to transform industries and reshape geopolitics. This race, however, also raises concerns about security and control. The Pentagon’s action against Anthropic can be viewed as a proactive measure to safeguard national security interests and to keep adversaries from gaining an edge through compromised AI technologies. It underscores the importance of building secure, resilient AI supply chains that cannot easily be exploited.

Ultimately, the Pentagon’s declaration highlights the urgent need for greater transparency and accountability in the AI industry. Companies must be willing to open their processes to scrutiny, demonstrate their commitment to security, and collaborate with governments to address potential risks. This includes implementing robust data security measures, diversifying supply chains, and conducting regular audits to identify and mitigate vulnerabilities. The future of AI depends on building trust and ensuring that these technologies are used responsibly and ethically. If companies fail to do this, they risk facing increased regulation, reputational damage, and even exclusion from critical markets.

Beyond national security, there’s a significant economic dimension to this story. The AI sector is booming, attracting billions of dollars in investment and creating many new jobs. If trust in AI systems erodes because of security concerns or supply chain vulnerabilities, however, the entire sector could suffer: businesses might hesitate to adopt AI solutions, consumers may be wary of AI-powered products, and investors could become more risk-averse. Maintaining the integrity of the AI supply chain is therefore not just a matter of national security; it is also crucial to the long-term health and stability of the AI economy.

This situation could be a bellwether for future AI regulations. As AI becomes more integral to critical infrastructure and national defense, governments are likely to implement stricter rules regarding data security, supply chain management, and algorithm transparency. AI companies must prepare for this evolving regulatory landscape by investing in compliance programs and building strong relationships with government agencies. Those who proactively address these concerns will be better positioned to succeed in the long run, while those who resist regulation may find themselves at a competitive disadvantage. The conversation around AI ethics and safety is no longer just academic; it’s becoming a matter of legal and regulatory compliance.

The challenge of securing the AI supply chain is too complex for any single company or government to solve alone. It requires collaboration between industry, academia, and government to develop common standards, share best practices, and address emerging threats. This collaboration should extend beyond national borders, as AI supply chains are often global in nature. International cooperation is essential to ensure that AI technologies are developed and deployed in a secure and responsible manner, regardless of where they originate.

The Pentagon’s decision to flag Anthropic as a supply chain risk is a wake-up call for the entire AI industry. It underscores the need for a more proactive and comprehensive approach to security, transparency, and accountability. As AI continues to evolve and permeate every aspect of our lives, it is essential that we address these challenges head-on to ensure that AI benefits humanity as a whole. The future of AI depends on our ability to build trust, mitigate risks, and foster responsible innovation.
