
What's Included?

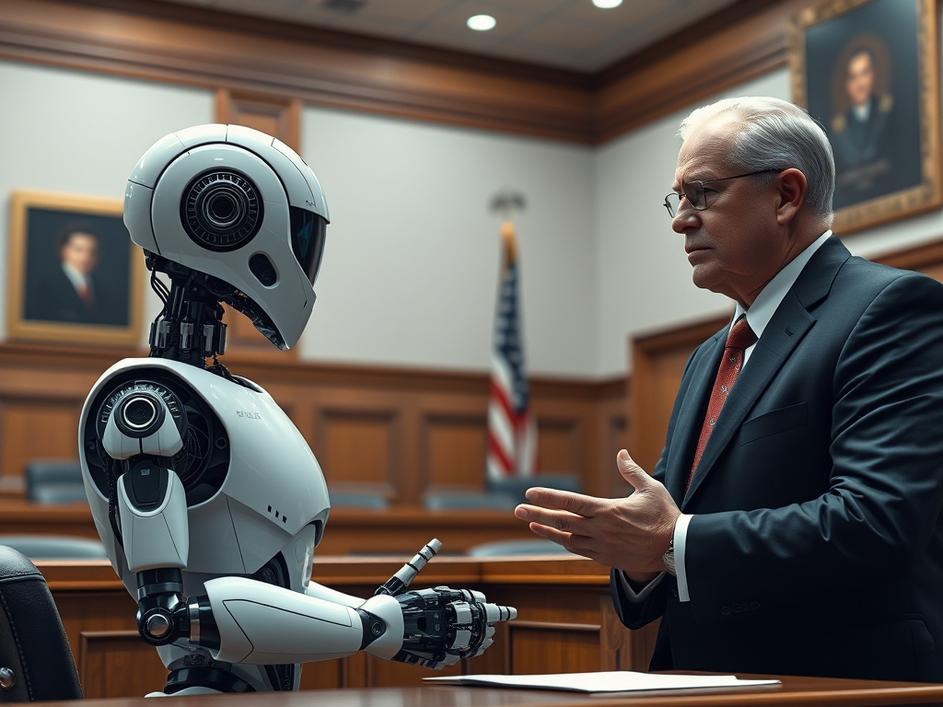

Anthropic, a company focused on developing safe and beneficial artificial intelligence, is taking on the U.S. government. It has filed a lawsuit alleging that the Trump administration unfairly blacklisted it. The core of the dispute is Anthropic’s ethical stance against using AI for potentially harmful applications, such as mass surveillance and advanced weaponry. The case raises important questions about government influence on the AI industry and the ethical responsibilities of AI developers.

At the center of this legal battle is a disagreement about how AI should be used. Anthropic has publicly expressed concerns about the dangers of AI in the wrong hands, particularly when it comes to surveillance technologies and autonomous weapons. Their position put them at odds with certain government interests that may have seen AI as a valuable tool for national security or law enforcement. The lawsuit claims that this ethical stance led to the company being unfairly targeted and effectively blacklisted, hindering their ability to operate and compete.

While the specifics of the alleged blacklisting are still unfolding, it likely involves government actions that made it difficult for Anthropic to secure contracts, funding, or partnerships. This could include behind-the-scenes pressure on potential investors or collaborators, or even direct interference with government procurement processes. The lawsuit aims to uncover the extent of this alleged interference and hold the government accountable for any illegal or unethical actions.

This case has far-reaching implications for the entire AI industry. If Anthropic can prove that it was unfairly targeted for its ethical stance, it could set a precedent that protects AI companies from government pressure to compromise their values. On the other hand, if the government prevails, it could send a chilling message to other AI developers, suggesting that they must align their ethical principles with government interests to avoid facing similar consequences. The outcome of this lawsuit will likely shape the future of AI ethics and the relationship between the government and the AI industry.

This isn’t just a business dispute; it’s a fight for the soul of AI. We’re at a critical juncture where the technology is rapidly advancing, and decisions made now will determine how it’s used for years to come. Anthropic’s lawsuit is a bold move that could help ensure that AI is developed and deployed in a responsible and ethical manner. It’s crucial to have companies willing to stand up for their principles, even when it means challenging powerful government interests.

The conversation around AI ethics has been growing steadily, and for good reason. AI systems are increasingly being used in areas that have a direct impact on people’s lives, from healthcare and finance to law enforcement and education. If these systems are not developed with ethical considerations in mind, they could perpetuate biases, discriminate against certain groups, and even pose a threat to human safety. That’s why it’s essential for AI companies to prioritize ethics and transparency, and for governments to support and encourage responsible AI development.

While it’s the government’s job to protect national security and ensure that AI is used responsibly, there’s a fine line between legitimate oversight and overreach. The government shouldn’t be able to stifle innovation or punish companies for holding different ethical views. The AI industry needs clear guidelines and regulations, but these should be designed to promote responsible AI development, not to suppress dissent or control the technology for political purposes. This is especially important as governments increasingly use AI for mass surveillance. We also have to ask ourselves whether it’s ethical to apply AI to weaponry at all.

The outcome of Anthropic’s lawsuit will have a significant impact on the AI industry. Regardless of the result, it has already sparked an important conversation about the role of ethics in AI development and the relationship between the government and the tech industry. As AI continues to evolve, it’s crucial to have these kinds of discussions and to ensure that the technology is used in a way that benefits society as a whole.

Anthropic’s decision to sue the Trump administration is a risky but necessary move. It’s a fight for the future of AI, and it could help ensure that the technology is developed and used in a way that aligns with human values. The case serves as a reminder that ethical considerations are not optional in the AI industry; they’re essential. By standing up for its principles, Anthropic is setting an example for other AI companies and helping to shape a more responsible and ethical future for AI.
