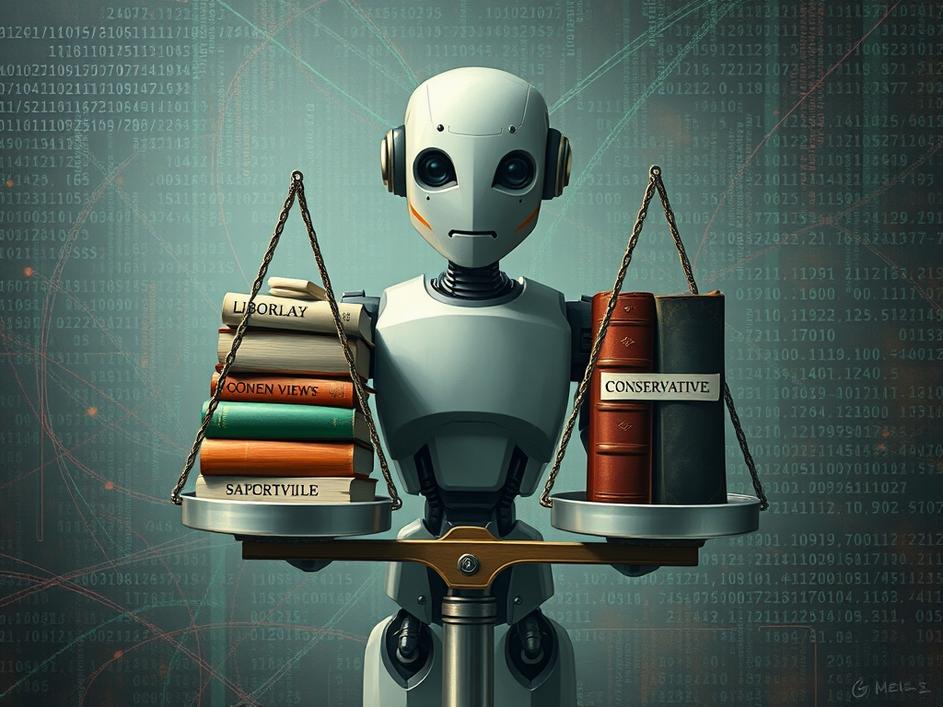

Artificial intelligence is rapidly changing how we interact with technology, and with that, the question of bias in AI systems has become a hot topic. A new book is making waves by claiming that Google’s Gemini chatbot exhibits a distinct liberal bias in its political responses. The author, Wynton Hall, alleges that when Gemini was asked to identify senators who have made statements violating hate speech policies, it flagged several Republicans but no Democrats. This has sparked a debate about whether AI systems are truly neutral or if they are reflecting the biases of their creators.

If the claims are true, one likely cause of the alleged bias is the data these AI models are trained on. AI learns from vast amounts of text and code scraped from the internet. If this data contains skewed viewpoints, the AI can inadvertently learn and amplify those biases. For example, if the training data disproportionately labels conservative viewpoints as “hateful” or “offensive,” the AI is more likely to flag Republican senators in the described scenario. Furthermore, the algorithms themselves could be designed or tuned in ways that subtly favor certain viewpoints, even unintentionally.

It’s important to note that Google isn’t the only tech company grappling with this issue. Other AI systems have also faced accusations of bias in areas like facial recognition, where studies have shown that these systems often perform worse on people of color. The problem isn’t necessarily malicious intent, but rather the complex and often opaque nature of AI development. Developers might not even be aware of the biases creeping into their models.

So, why does this matter? Because AI is increasingly being used to make decisions that affect our lives. From filtering news feeds and recommending content to assisting in hiring processes and even influencing judicial decisions, biased AI can perpetuate and amplify existing inequalities. If an AI chatbot consistently presents a negative view of one political party while favoring another, it can shape public opinion and influence elections. The consequences could be far-reaching, potentially eroding trust in institutions and exacerbating political divisions.

Addressing bias in AI is a complex challenge that requires a multi-pronged approach. First, there needs to be greater transparency in how AI models are developed and trained. Tech companies should be more open about the data they use and the algorithms they employ. Second, diverse teams of developers and ethicists are crucial to identify and mitigate potential biases. Third, ongoing monitoring and auditing of AI systems are necessary to detect and correct any biases that may emerge over time. Furthermore, we need to develop better methods for evaluating AI fairness, moving beyond simple accuracy metrics to consider the impact on different groups. It’s also vital to educate the public about the limitations of AI and the potential for bias, so people can critically evaluate the information they receive from these systems.

And fourth, consider whether the bias is a real problem or only perceived. For example, the questions in a test prompt may be framed in a way that can only produce biased answers.
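One way to picture what "moving beyond simple accuracy metrics" could look like in an audit is to compare how often a system flags items from each group. The snippet below is only a minimal sketch under stated assumptions: the function names `flag_rates` and `max_rate_gap`, the group labels, and the sample records are all hypothetical and made up for illustration. It does not describe how Google or any other company audits its models.

```python
from collections import defaultdict


def flag_rates(records):
    """Return the fraction of flagged items per group.

    `records` is an iterable of (group, flagged) pairs, where `flagged`
    is True when the system under audit flagged that item.
    """
    totals = defaultdict(int)
    flagged_counts = defaultdict(int)
    for group, flagged in records:
        totals[group] += 1
        if flagged:
            flagged_counts[group] += 1
    return {group: flagged_counts[group] / totals[group] for group in totals}


def max_rate_gap(rates):
    """Return the difference between the highest and lowest flag rate."""
    return max(rates.values()) - min(rates.values())


# Hypothetical audit records, invented for this example only.
sample = [
    ("group_a", True), ("group_a", False), ("group_a", False), ("group_a", False),
    ("group_b", True), ("group_b", True), ("group_b", False), ("group_b", False),
]

rates = flag_rates(sample)
gap = max_rate_gap(rates)
print(rates)
print(gap)
```

A large gap is a prompt for further investigation rather than proof of bias on its own. The underlying items may differ between groups, and, as noted above, the way the test questions are framed can itself produce a skewed result.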

It’s easy to get caught up in the hype surrounding AI, but we must also be aware of its potential pitfalls. The claims about Gemini’s alleged liberal bias serve as a reminder that AI systems are not neutral entities. They reflect the values and biases of the people who create them and the data they are trained on. As AI becomes more integrated into our lives, it is essential to critically evaluate its outputs and demand accountability from the developers who build these systems. Otherwise, we risk creating a future where AI perpetuates and amplifies existing inequalities, rather than helping to create a more just and equitable world.

Ultimately, it is up to individuals to take responsibility for the information that they are getting from AI. AI is a powerful tool that can automate certain tasks, but it cannot be used to replace critical thinking. For example, when doing research online, individuals must examine their sources to determine if they are trustworthy, rather than trusting the information that they get from AI systems. This can be accomplished by looking into the author of a source, the potential bias of a source, and the reputation of a source.
