
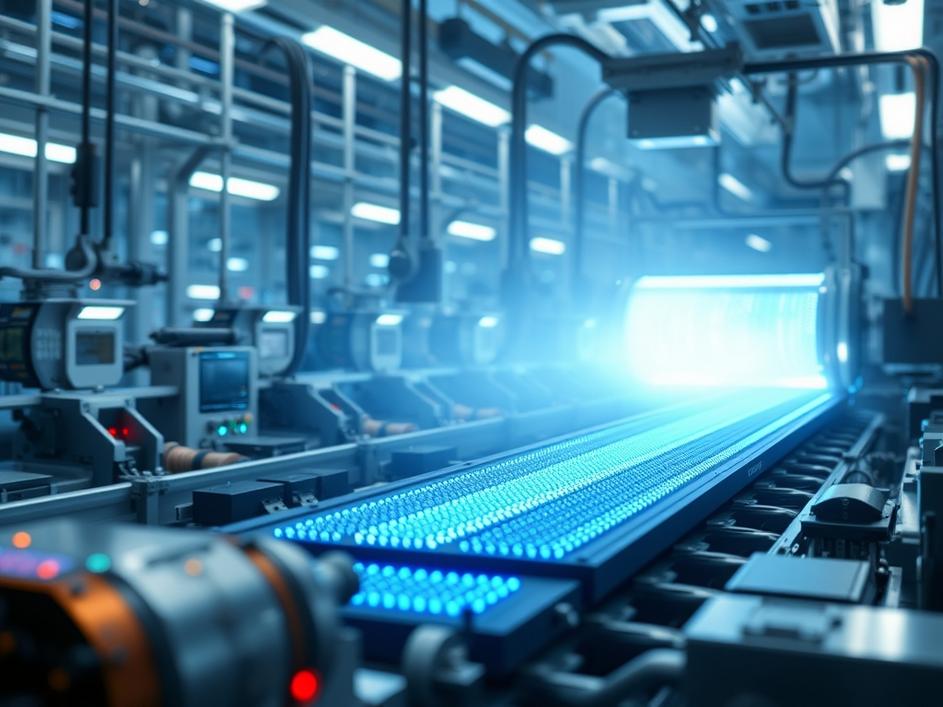

Artificial intelligence is everywhere. From chatbots to self-driving cars, AI is rapidly changing our world. But behind the scenes, something critical is happening: AI’s massive processing needs are creating an unprecedented demand for memory chips, specifically High Bandwidth Memory (HBM). It’s like a gold rush, but instead of digging in the dirt, companies are scrambling to secure enough of this specialized memory to power their AI ambitions.

Think of HBM as a super-fast lane for data. HBM is itself a form of DRAM (Dynamic Random-Access Memory), but conventional DRAM modules, such as the DDR memory found in PCs and servers, simply can’t keep up with the data-intensive demands of AI workloads. HBM stacks multiple DRAM dies vertically and places the stack in the same package as the processor, creating a much wider and faster data pathway. This allows AI processors to access the vast amounts of data they need with incredible speed and efficiency. Without HBM, AI systems would be severely limited, like trying to run a marathon while breathing through a straw.
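To put a rough number on that "wider pathway", here is a minimal back-of-the-envelope sketch. It assumes the usual rule of thumb that theoretical peak bandwidth is the interface width multiplied by the per-pin data rate. The figures (a 1024-bit HBM3-class stack and a 64-bit DDR5-6400-class module, both at 6.4 Gb/s per pin) are illustrative assumptions rather than numbers from this article, and real-world throughput will be lower.

```python
def peak_bandwidth_gb_s(bus_width_bits: int, gbit_per_s_per_pin: float) -> float:
    """Theoretical peak bandwidth in GB/s: width x per-pin rate, bits -> bytes."""
    return bus_width_bits * gbit_per_s_per_pin / 8


# Illustrative assumptions, not measured values:
# one HBM3-class stack: 1024-bit interface at 6.4 Gb/s per pin
hbm_stack = peak_bandwidth_gb_s(1024, 6.4)
# one DDR5-6400-class module: 64 data bits at 6.4 Gb/s per pin
ddr_module = peak_bandwidth_gb_s(64, 6.4)

print(f"HBM stack:  {hbm_stack:.1f} GB/s")
print(f"DDR module: {ddr_module:.1f} GB/s")
print(f"Ratio:      {hbm_stack / ddr_module:.0f}x")
```

At the same per-pin speed, the gap comes entirely from the interface width, which is why stacking dies next to the processor matters so much. AI accelerators typically combine several stacks, multiplying the total further.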

The surge in AI development caught many by surprise. Companies underestimated how quickly AI models would grow in complexity and, consequently, how much memory they would require. HBM is also notoriously difficult and expensive to manufacture. It requires specialized equipment and processes, limiting the number of companies capable of producing it. Imagine trying to build a skyscraper with only a handful of construction crews – that’s the situation we’re in with HBM.

The HBM shortage is sending ripples throughout the entire tech industry. Companies developing AI hardware and software are struggling to get their hands on enough memory to meet their needs. This is driving up prices and delaying the rollout of new AI products and services. It’s affecting everyone from cloud providers to smartphone manufacturers. The companies that can secure a steady supply of HBM will have a significant competitive advantage in the AI race. Smaller players might be squeezed out altogether, unable to compete with the giants who have the resources to lock down supply contracts. We could see increased consolidation in the AI space as a result.

Memory manufacturers are the obvious winners. Companies like SK Hynix, Samsung, and Micron, which are at the forefront of HBM production, are seeing their revenues soar. But the situation is more complex than it seems. These manufacturers also face challenges, including the need to invest heavily in expanding their production capacity. And there’s always the risk that demand for HBM could eventually plateau, leaving them with excess capacity. So, while they’re benefiting now, they need to manage their investments carefully to avoid overextending themselves.

The HBM shortage also has geopolitical implications. The production of advanced memory chips is concentrated among a handful of suppliers, primarily South Korea’s SK Hynix and Samsung and the US-based Micron. This creates a potential vulnerability, as any disruption to the supply chain could have a significant impact on the global AI industry. Governments are increasingly aware of this risk and are taking steps to encourage domestic chip production. We might see new policies and incentives aimed at boosting semiconductor manufacturing in other regions, reducing reliance on a limited number of suppliers. This is a complex issue with far-reaching consequences for national security and economic competitiveness.

The HBM shortage is likely to persist for the next few years. Memory manufacturers are working to increase production capacity, but it takes time to build new fabs and ramp up production. In the meantime, companies will need to find creative ways to optimize their AI models to reduce their memory footprint. We might see a greater focus on techniques like model compression and quantization, which can make AI models smaller and more efficient. There’s also ongoing research into alternative memory technologies that could eventually replace HBM. The pressure to find solutions is immense, and innovation is likely to accelerate as a result.
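To illustrate why quantization reduces memory pressure, here is a minimal sketch. It assumes a hypothetical 7-billion-parameter model and counts only the weights, ignoring activations, caches, and other runtime overhead. The helper names and the simple symmetric 8-bit scheme are assumptions chosen for illustration, not a description of any particular framework.

```python
def weight_footprint_gb(num_params: int, bits_per_weight: int) -> float:
    """Memory needed for the weights alone, in GB (1 GB = 1e9 bytes)."""
    return num_params * bits_per_weight / 8 / 1e9


def quantize_int8(weights):
    """Symmetric quantization: map floats to integers in [-127, 127]."""
    # Scale so the largest magnitude maps to 127; fall back to 1.0 if all zeros.
    scale = max(abs(w) for w in weights) / 127 or 1.0
    quantized = [max(-127, min(127, round(w / scale))) for w in weights]
    return quantized, scale


def dequantize(quantized, scale):
    """Recover approximate float values from the stored integers."""
    return [q * scale for q in quantized]


params = 7_000_000_000  # hypothetical model size
for bits in (16, 8, 4):
    print(f"{bits:>2}-bit weights: {weight_footprint_gb(params, bits):.1f} GB")

q, scale = quantize_int8([0.5, -1.0, 0.25])
print(q, dequantize(q, scale))
```

Halving the bits per weight halves the weight footprint, at the cost of a small rounding error in each value. Whether that error is acceptable depends on the model and the task.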

While the current HBM shortage is a challenge, it’s also a sign of the incredible potential of AI. The fact that companies are willing to pay a premium for HBM demonstrates how valuable AI has become. In the long run, the shortage will likely be resolved as memory manufacturers ramp up production and new technologies emerge. But the underlying demand for memory will continue to grow as AI becomes even more pervasive. This means that memory will remain a critical resource for the foreseeable future, and companies that can effectively manage their memory supply will have a distinct advantage in the AI era.

The HBM shortage is more than just a temporary inconvenience; it’s a symptom of a fundamental shift in the tech landscape. AI is driving a new era of computing, one that demands ever-increasing amounts of memory. Companies need to adapt to this new reality by investing in memory efficiency, diversifying their supply chains, and exploring alternative technologies. The future of AI depends on it.
